RISC-V is no longer content to disrupt the CPU industry. It is waging war against every type of processor integrated into an SoC or advanced package, an ambitious plan that will face stiff competition from entrenched players with deep-pocketed R&D operations and their well-constructed ecosystems.

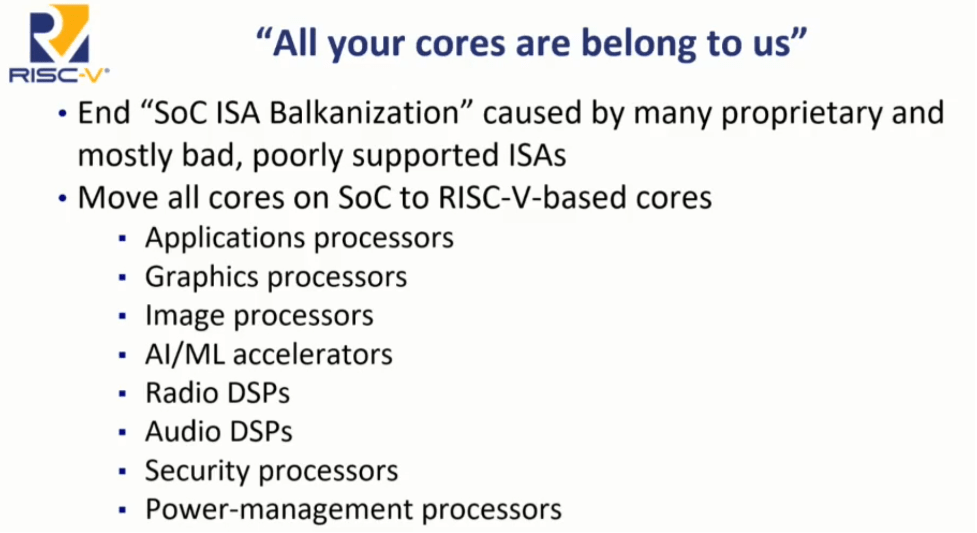

When Calista Redmond, CEO of RISC-V International, said at last year’s summit that RISC-V would be everywhere, most people probably thought she was talking about CPUs. It is clear the organization intends to have RISC-V cores in servers and deeply embedded devices. But the group’s sights are set much wider than that. Redmond implied that every processing core, whether a GPU, a GPGPU, an AI processor, or some type of processor yet to be conceived, will be RISC-V-based. Krste Asanović, professor at UC Berkeley and chair of RISC-V International, made this a little clearer in his State of the Union address, when he presented a slide laying out that vision.

Today, that vision is starting to take shape, beginning with recently completed work on security and encryption. Groups are being formed, and donations reviewed, to add support for matrix multiplication, a fundamental capability for GPUs and AI processors.

Behind these bold statements are fundamental shifts in both data and compute architectures. It’s no longer about which company has the fastest CPU, because no matter how well it’s designed, all CPUs have limitations. “In some market verticals, such as 5/6G, inferencing and video processing, they have computational workloads that are no longer viable to process on traditional CPUs,” says Russell Klein, program director for the Catapult HLS team at Siemens EDA. “This is where we are seeing adoption of new computational approaches.”

Almost every application has some form of control structure. “Graphics is a very specific beast with very specific requirements from a memory access perspective,” says Frank Schirrmeister, vice president of solutions and business development at Arteris. “If you look at some of the recent announcements for AI and RISC-V, you have companies announcing processing elements that clearly have ISAs underneath.”

In some cases, those require just the right instructions. “RISC-V has something called vector extensions,” says Charlie Hauck, CEO at Bluespec. “Depending upon how you implement that, you can get something that starts to look pretty much like a GPU in terms of a lot of smaller type units that are operating in parallel, or in a SIMD type of fashion.”
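To make the vector idea concrete, here is a minimal sketch in Python that models RISC-V vector "strip-mining" rather than calling real vector instructions. The `vsetvl` helper stands in for the `vsetvli` instruction and `vlmax` for the hardware's maximum vector length; both names, and the choice of granting `min(avl, vlmax)` elements, are modeling assumptions (the specification gives implementations some latitude in the granted length). The loop asks how many elements it may process, updates that many lanes as one operation, and repeats until the data runs out.

```python
from typing import List


def vsetvl(avl: int, vlmax: int) -> int:
    """Hypothetical model of vsetvli: grant min(remaining elements, hardware max)."""
    return min(avl, vlmax)


def saxpy(a: float, x: List[float], y: List[float], vlmax: int) -> List[float]:
    """Compute a*x + y in vector-length-agnostic chunks (a model, not real RVV)."""
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    out = list(y)
    n = len(x)
    i = 0
    while i < n:
        vl = vsetvl(n - i, vlmax)  # setup: how many lanes this pass may use
        # One modeled "vector instruction": all vl lanes are updated together.
        out[i:i + vl] = [a * xv + yv for xv, yv in zip(x[i:i + vl], y[i:i + vl])]
        i += vl  # advance past the elements just processed
    return out
```

Because the loop never hard-codes a width, it produces the same answer whether `vlmax` is 1, 4, or 8. That property is what lets one vector binary run on both narrow and wide RISC-V vector hardware.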

The path is not easy, however. “It’s attractive to add GPU functions to RISC-V architecture by instruction extension because the GPU is playing an important role in AI areas,” says Fujie Fan, R&D director at Stream Computing. “However, we have realized the inevitable problems both in architecture and ecosystem.”

Skeptics abound. The history of processors is littered with failed startups that proclaimed they would crush the competition with their new compute architectures. What many failed to account for is that the competition does not stand still, the compute landscape is changing constantly and at an accelerating pace, and the pain and expense of moving to new methodologies and tools, and of training or retraining engineers, is far from trivial.

“The value RISC-V brings to adoptees is in the control processing domain, where it has readily available open-source tools, readily available operating systems (Linux or real-time), and the promise of long-term software compatibility/portability delivered through ISA commonality,” says Dhanendra Jani, vice president of engineering at Quadric. “Graphics processing is a very different challenge — a domain-specific processing challenge. To adapt the base RISC-V instruction set into one well-suited to GPU tasks would require significant investment defining custom ISA extensions, building highly complex micro-architecture changes, and performing major surgery on the open-source tools such that they barely resemble the original. In doing so, almost all the inherent value of using RISC-V is washed away through extensive customization. You would lose most of the upside while potentially being shackled to core ISA features that limit usefulness in the domain-specific GPU context. In short, what’s the point of starting with RISC-V instead of a clean sheet of paper?”

So what is the plan for RISC-V? “Vectors, which are SIMD operations, enable you to do an operation on multiple pieces of data simultaneously and have the chip figure out the best way to bring things in from memory, process the single instruction, and then put things back into memory, or move them on to the next operation,” said Mark Himelstein, CTO for RISC-V International. “The basic thing that’s missing is matrix multiply. We have received multiple proposals, one of which is like the vector extensions that fits inside a 32-bit instruction. That is very difficult and requires setup instructions. You set up things like the stride and the masks, and then you pull the trigger and do the operation. But if you want to be competitive with larger matrix implementations on other architectures you have to go to a wider 64-bit instruction. This is what a lot of people are talking about.”
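The setup-then-trigger pattern Himelstein describes can be sketched by building a matrix multiply out of the same kind of modeled vector operations. This is a hypothetical Python model, not any of the submitted matrix proposals: `vle` and `vlse` loosely mimic unit-stride and strided vector loads, and every dot product sets the vector length and stride before issuing its multiply-and-reduce step. Masks are omitted for brevity, and all helper names are illustrative.

```python
from typing import List


def vsetvl(avl: int, vlmax: int) -> int:
    """Hypothetical model of vsetvli: grant min(remaining elements, hardware max)."""
    return min(avl, vlmax)


def vle(mem: List[float], base: int, vl: int) -> List[float]:
    """Model of a unit-stride vector load of vl consecutive elements."""
    return mem[base:base + vl]


def vlse(mem: List[float], base: int, stride: int, vl: int) -> List[float]:
    """Model of a strided vector load: element e comes from base + e*stride."""
    return [mem[base + e * stride] for e in range(vl)]


def matmul(a: List[float], b: List[float], m: int, k: int, n: int,
           vlmax: int) -> List[float]:
    """C (m x n) = A (m x k) @ B (k x n); all matrices are flat row-major lists."""
    c = [0.0] * (m * n)
    for i in range(m):
        for j in range(n):
            acc = 0.0
            p = 0
            while p < k:
                vl = vsetvl(k - p, vlmax)             # setup: vector length
                row = vle(a, i * k + p, vl)           # A[i, p:p+vl], unit stride
                col = vlse(b, p * n + j, n, vl)       # B[p:p+vl, j], stride = n
                # "Pull the trigger": multiply lane-wise, then reduce to a scalar.
                acc += sum(x * y for x, y in zip(row, col))
                p += vl
            c[i * n + j] = acc
    return c
```

The amount of setup in the inner loop, repeated for every output element, is one way to see why proposals that operate on whole tiles with wider instructions attract interest.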

The question is how much of the complexity is exposed, and how much remains hidden. “The ISA is a key component,” says Anand Patel, senior director of product management for Arm’s Client Line of Business. “However, the complexity of GPUs is most often abstracted by standard APIs such as Vulkan or OpenCL. This makes it easier for developers to target across multiple vendors while leaving the lower-level optimization to the GPU vendor. Even in GPGPU-type applications, the architecture of GPUs is fast evolving to keep pace with the new and emerging use-cases such as AI processing, and it is therefore crucial developers have access to a mature software ecosystem to keep up with these changes. Standard APIs ensure the developer does not have to worry about ISA changes, while transparently seeing the benefit of these underlying improvements.”

Macro-architecture and micro-architecture

It is important to separate the two concerns, because RISC-V only defines the macro-architecture and leaves all micro-architectural decisions to the implementer. When moving beyond a CPU, this becomes a much bigger issue. “Von Neumann is restrictive in some ways, but how a particular implementation interacts with memory is not dictated by RISC-V,” says RISC-V’s Himelstein. “Most GPU implementations optimize this with memory in a multi-stage pipe. You have some stuff coming in from memory, while some operations are going through. When you start looking at GPUs, you talk about exposing that memory interaction. We do have some restrictions about the order things occur in, because you want to make sure that operation is well defined.”
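The overlap of memory traffic and computation described above can be illustrated with an idealized timeline. The sketch below assumes a hypothetical three-stage pipe (load, compute, store) in which each stage takes one step per tile, and simply lists which tile occupies which stage at each step. Whether hardware or software arranges this overlap is an implementation choice that, as Himelstein notes, RISC-V does not dictate.

```python
from typing import List, Sequence, Tuple


def pipeline_schedule(
    n_tiles: int,
    stages: Sequence[str] = ("load", "compute", "store"),
) -> List[List[Tuple[str, int]]]:
    """Return, for each step, the (stage, tile) pairs active in that step.

    Idealized model: every stage takes exactly one step, and tile t enters
    stage s at step t + s, so different tiles occupy different stages at once.
    """
    steps = []
    for t in range(n_tiles + len(stages) - 1):
        active = []
        for s, name in enumerate(stages):
            tile = t - s  # the tile that reached stage s at step t
            if 0 <= tile < n_tiles:
                active.append((name, tile))
        steps.append(active)
    return steps
```

With four tiles the overlapped schedule finishes in six steps, versus twelve if each tile were loaded, computed, and stored before the next one started.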

There are many ways to look at a problem. “The most advanced GPU products can be divided into traditional graphics processing and modern AI acceleration,” says Stream’s Fan. “The former is more like a programmable ASIC, rather than a general-purpose processor, where the core competence comes from the implementation of streaming processors rather than the ISA. The instruction set is usually invisible to programmers and always takes a back seat. The design of a graphics processor is strongly correlated to micro-architecture and is suitable to be implemented with customized instructions. For most of us, the standardization of AI and multimedia capabilities is more attractive. To achieve such capabilities, copying the GPU is not the only way. For RISC-V, multimedia functions can be implemented by vector architecture, and AI capabilities can be implemented by more efficient heterogeneous architecture with matrix accelerators.”

Some aspects change if you expect external programmers to write software for your device. “Dataflow processing can be done in a couple of ways,” says Siemens’ Klein. “One is to use a pipeline of small general-purpose processors, or even specialized processors, each working on one stage of a problem. This is significantly faster and more efficient than a single big CPU. Using programmable processors as the compute elements retains a great deal of flexibility, but does give up some performance and efficiency. This approach can really be built out of any capable many-core processor. The problem is the approach has been soundly rejected by the software development community. They are loath to give up their single-threaded programming model.”
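A pipeline of small processors, each handling one stage, can be modeled with threads connected by FIFO queues. In this sketch the Python threads are stand-ins for cores (Python's global interpreter lock means it shows the structure, not a real speedup), and `run_pipeline` and the stage functions are hypothetical names chosen for illustration.

```python
import queue
import threading
from typing import Any, Callable, Iterable, List

_DONE = object()  # sentinel that marks the end of the data stream


def _stage_worker(fn: Callable[[Any], Any], inbox: queue.Queue,
                  outbox: queue.Queue) -> None:
    """One 'core': apply fn to every item from inbox and forward the result."""
    while True:
        item = inbox.get()
        if item is _DONE:
            outbox.put(_DONE)  # pass the end-of-stream marker downstream
            return
        outbox.put(fn(item))


def run_pipeline(stages: List[Callable[[Any], Any]],
                 items: Iterable[Any]) -> List[Any]:
    """Stream items through one worker thread per stage, linked by FIFO queues."""
    queues = [queue.Queue() for _ in range(len(stages) + 1)]
    workers = [
        threading.Thread(target=_stage_worker, args=(fn, queues[i], queues[i + 1]))
        for i, fn in enumerate(stages)
    ]
    for w in workers:
        w.start()
    for x in items:
        queues[0].put(x)
    queues[0].put(_DONE)
    results = []
    while True:
        r = queues[-1].get()
        if r is _DONE:
            break
        results.append(r)
    for w in workers:
        w.join()
    return results
```

Each stage sees only its own input and output queues. That is the departure from a single-threaded programming model that Klein says developers resist, even though every stage can work on a different item at the same time.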

This is a big problem for many companies. “If you’re looking at a general-purpose processor, depending upon the application requirements, it could be anything from a single-issue, two- or three-stage microcontroller all the way up to a multi-issue superscalar design running multi-cores,” says Bluespec’s Hauck. “Alternatively, you see people with 4096 RISC-V processors, each of them small, reduced RV32I-type things that are pulled together in a particular system architecture and interconnect that enables these things to act in the spirit of a GPU. They consist of a lot of smaller integer units that are collaborating together on a big job. The challenge is how do you develop software for that?”
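One common way to program many small cores is the single-program, multiple-data (SPMD) style: every core runs the same code and uses its own ID to pick its share of the work. RISC-V calls a hardware thread a hart. The helper names below are hypothetical, and the loop over harts runs sequentially here where real hardware would run the harts concurrently.

```python
from typing import List, Tuple


def hart_slice(hart_id: int, n_harts: int, n_items: int) -> Tuple[int, int]:
    """Contiguous block [start, end) of the work owned by one hart.

    The first `rem` harts receive one extra item so the blocks cover
    every item exactly once; surplus harts get an empty block.
    """
    base, rem = divmod(n_items, n_harts)
    start = hart_id * base + min(hart_id, rem)
    end = start + base + (1 if hart_id < rem else 0)
    return start, end


def parallel_sum(data: List[int], n_harts: int) -> int:
    """Each hart sums its own block; a final step reduces the partial sums."""
    partials = []
    for hart in range(n_harts):  # concurrent on real hardware, sequential here
        start, end = hart_slice(hart, n_harts, len(data))
        partials.append(sum(data[start:end]))
    return sum(partials)  # reduction of the per-hart results
```

Splitting the data is the easy part; the harder software questions Hauck raises are how the harts communicate, synchronize, and share memory bandwidth inside a particular interconnect.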

With greater flexibility, new approaches may be called for. “In a large HPC, if you’re running a workload that is more data center-oriented, it’s got a certain set of characteristics. But if your application is a scientific one, maybe there are some capabilities around loads and stores and multiple mathematical-type operations that can be extended,” says Andy Meyer, principal product marketing manager for Siemens EDA. “There are some challenges in the ecosystem if people choose that route. The big area of growth is in hyperscaler applications. If you look at the amount of the venture capital money, they’re clearly solving a unique problem.”

Software and ecosystem

Hardware/software co-design has been a goal for several decades, and RISC-V is one of the few areas in which any notion of progress has been seen. “Traditional data processing designs go to great lengths to separate hardware and software,” says Klein. “The hardware is created and then the software folks get turned loose on it. The assumption is that if the hardware is sufficiently general, then the software will be able to do whatever it needs to do to deliver on the system’s functionality. If you have enough margin in your compute capability and power consumption, then this works. I won’t say it works great, but it works, despite being pretty wasteful.”

Domain-specific computing starts to change that. “To really take advantage of the potential of dataflow processors means customization for the specific application,” adds Klein. “This means that the hardware and software teams need to work together to achieve success. This makes a lot of organizations and design teams really uncomfortable.”

Sometimes co-design is the only way. “Suppose you need to do some processing at the edge,” says Bluespec’s Hauck. “There’s always going to be form factor, size, or power limitations. There’s no amount of software innovation that’s going to get you anywhere. If you’ve got a software stack, the stack is what it is. You’re not going to be able to software-optimize your way to any particular solution that has those types of constraints. You have to go in the hardware.”

When an embedded system is being created, the processor is less likely to be exposed to a wide programming audience, and many more optimizations become possible. “Consider the vector crypto work that has been done,” says Himelstein. “Nobody is going to program vector crypto in their programs. It’s just not what they do. What they do is they use libraries, like libSSL or some of the other crypto libraries, and they use these instructions. Sometimes they use them by going into assembly language, and then they present a C, or C++, or Java interface, so that programs, applications, can take advantage of them.”
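From the application's side, the library layering Himelstein describes looks like an ordinary function call. In this sketch using Python's standard `hashlib` module, the caller hashes data through a portable interface. Whether the underlying crypto library (commonly OpenSSL's libcrypto) uses hardware instructions, such as the SHA-2 instructions in RISC-V's vector crypto extensions, depends on how it was built and on the hardware, and is invisible at this level. The `sha256_hex` wrapper is a hypothetical name.

```python
import hashlib
from typing import Iterable


def sha256_hex(chunks: Iterable[bytes]) -> str:
    """Hash a stream of byte chunks through the library's portable interface.

    The application never sees which instructions compute the digest;
    that choice is made inside the library beneath this call.
    """
    h = hashlib.sha256()
    for chunk in chunks:
        h.update(chunk)  # incremental hashing: same result as one big update
    return h.hexdigest()
```

Hashing `[b"a", b"bc"]` yields the standard SHA-256 test vector for `"abc"`, regardless of whether the library used scalar code or dedicated instructions to get there.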

When general programming is required, it becomes a lot more difficult. “If you look at the ecosystem for the GPU, the toolchain is controlled by NVIDIA,” says Fan. “Other competitors, including AMD, have tried to break the monopoly but failed. It’s almost impossible to be compatible with a continuously updated NVIDIA ecosystem by extending RISC-V’s standard instruction set. On the other hand, it’s also hard to make a fresh start, because NVIDIA has the first-mover advantages.”

Time to success

Still, RISC-V is all about enabling innovation. “A lot of what we see in terms of why legacy solutions are the best solutions right now is historical,” says Hauck. “The place for smart architects and smart software developers to really exercise their expertise is going to be a RISC-V type environment.”

It starts with a communal need. “If there’s a need, people will come together and collaborate, and RISC-V is about collaboration,” says Siemens’ Meyer. “You see example after example of that, with all the different initiatives and consortiums that are happening around the world. The ecosystem will evolve, but there’s a balance between the commercial side of things and supporting the community.”

That can create some business challenges, particularly when return on investment is drawn out. “It’s going to be a while before RISC-V catches up and can compete with an established product and ecosystem,” says Hauck. “But you’re going to start to see, for certain applications, that there is no reason why a RISC-V processor, given the right company behind it, can’t succeed. There are a lot of great software developers out there. Eventually they will get there because the community has got all the tools they need to innovate.”

So how long will it be before we see RISC-V GPUs and AI processors? “If you want something that has a reasonable complement of AI features for a non-GPU kind of world, then you already have that today,” says Himelstein. “But a full complement with a ratified matrix and all the other things that these groups have been asking for is probably going to show up in about a year and a half for the basic stuff, and then the advanced stuff probably in three to four years.”

An incremental approach allows pieces to be used a lot quicker. “It is much better to standardize each of the GPU capabilities respectively, rather than standardize the whole GPU product,” says Fan. “As for AI capabilities, we think the ongoing RISC-V matrix extension is a better choice for IC designers.”
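Standardizing capabilities one at a time fits how RISC-V already names its extensions: software can check for each piece separately. The sketch below parses a simplified ISA string such as `rv64gcv_zvkned`. It ignores version numbers and other details of the full naming rules, expands the `g` shorthand to IMAFD plus Zicsr and Zifencei, and `parse_isa` and `has_extension` are hypothetical helpers, not a standard API.

```python
from typing import Set, Tuple


def parse_isa(isa: str) -> Tuple[int, Set[str], Set[str]]:
    """Split a simplified ISA string into (XLEN, single-letter, multi-letter).

    Assumes the form rv32/rv64 + single letters, then '_'-separated
    multi-letter extensions; version suffixes are not handled.
    """
    isa = isa.strip().lower()
    if not isa.startswith(("rv32", "rv64")):
        raise ValueError(f"not a simplified RISC-V ISA string: {isa!r}")
    xlen = int(isa[2:4])
    parts = isa[4:].split("_")
    single = set(parts[0])                  # e.g. {'i', 'm', 'a', 'c', 'v'}
    multi = {p for p in parts[1:] if p}     # e.g. {'zvkned'}
    if "g" in single:                       # G is shorthand for IMAFD_Zicsr_Zifencei
        single.discard("g")
        single |= set("imafd")
        multi |= {"zicsr", "zifencei"}
    return xlen, single, multi


def has_extension(isa: str, ext: str) -> bool:
    """True if the (simplified) ISA string advertises the given extension."""
    _, single, multi = parse_isa(isa)
    ext = ext.lower()
    return ext in single if len(ext) == 1 else ext in multi
```

A library could use a check like this to enable, say, a matrix or vector-crypto code path only where that specific extension is present, which is the kind of piece-by-piece adoption Fan describes.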

Related Reading

Is RISC-V Ready For Supercomputing?

The industry seems to think it is a real goal for the open instruction set architecture.

The Uncertainties Of RISC-V Compliance

Tests and verification are required, but that still won’t guarantee these open-source ISAs will work with other software.

Selecting The Right RISC-V Core

Ensuring that your product contains the best RISC-V processor core is not an easy decision, and current tools are not up to the task.

Source: https://semiengineering.com/risc-v-wants-all-your-cores/