Machine learning (ML) can help companies make better business decisions through advanced analytics. Companies across industries apply ML to use cases such as predicting customer churn, demand forecasting, credit scoring, predicting late shipments, and improving manufacturing quality.

In this blog post, we’ll look at how Amazon SageMaker Canvas delivers faster model training times, enabling iterative prototyping and experimentation, which in turn shortens the time it takes to generate better predictions.

Training machine learning models

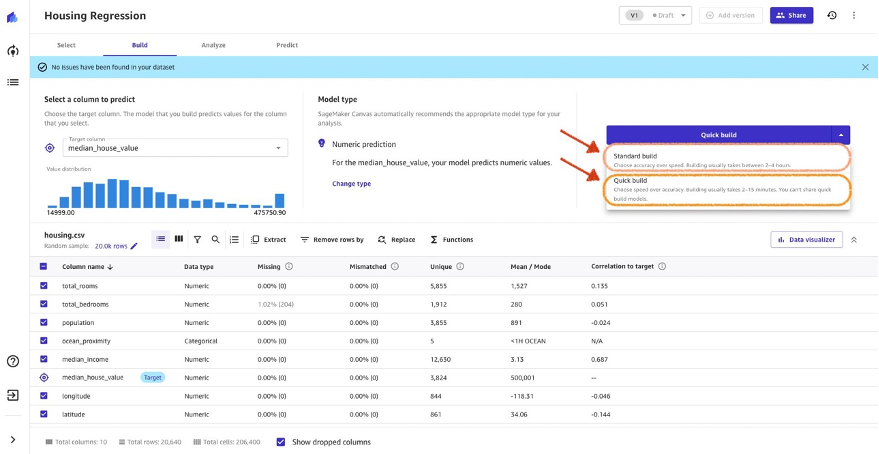

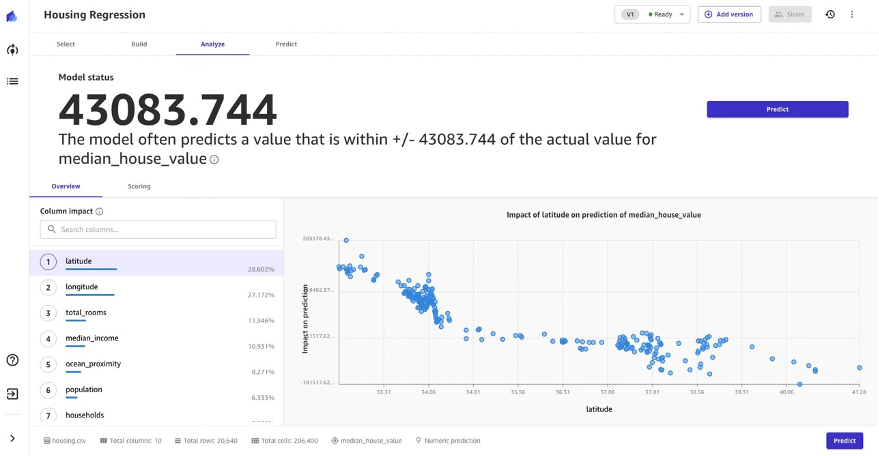

SageMaker Canvas offers two methods to train ML models without writing code: Quick build and Standard build. Both methods deliver a fully trained ML model, including column impact for tabular data. Quick build focuses on speed and experimentation, while Standard build provides the highest levels of accuracy.

With both methods, SageMaker Canvas pre-processes the data, chooses the right algorithm, explores and optimizes the hyperparameter space, and generates the model. This process is abstracted from the user and done behind the scenes, allowing the user to focus on the data and the results rather than the technical aspects of model training.

Faster model training times

Previously, Quick build took up to 20 minutes and Standard build took up to 4 hours to generate a fully trained model with feature importance. With new performance optimizations, you can now get a Quick build model in less than 7 minutes and a Standard build model in less than 2 hours, depending on the size of your dataset. We estimated these numbers by running benchmark tests on datasets ranging from 0.5 MB to 100 MB.

Under the hood, SageMaker Canvas uses multiple AutoML technologies to automatically build the best ML models for your data. Because datasets have heterogeneous characteristics, it’s difficult to know in advance which algorithm best fits a particular dataset. The newly introduced performance optimizations in SageMaker Canvas run several trials across different algorithms and train a series of models behind the scenes, before returning the best model for the given dataset.

These trials are run in parallel for each dataset to find the best configuration in terms of performance and latency. Each configuration is evaluated on objective metrics such as F1 score and precision, and algorithm hyperparameters are tuned to produce optimal scores for these metrics.
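
To make the idea of parallel trials concrete, here is a minimal Python sketch. It is not the SageMaker Canvas implementation, which is abstracted from the user. It assumes a toy validation set and uses a simple threshold rule as a stand-in for each trained candidate model. The names `f1_score`, `run_trial`, `best_trial`, `FEATURES`, and `LABELS` are hypothetical and chosen only for illustration. The sketch runs every candidate configuration in parallel, scores each one with F1, and returns the best.

```python
from concurrent.futures import ThreadPoolExecutor


def f1_score(y_true, y_pred):
    """F1 is the harmonic mean of precision and recall for the positive class (1)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0  # no true positives, so precision and recall are both 0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical toy validation data: one numeric feature and a binary label.
FEATURES = [0.1, 0.3, 0.4, 0.6, 0.7, 0.9]
LABELS = [0, 0, 1, 1, 1, 1]


def run_trial(threshold):
    """One trial: a threshold rule stands in for a trained candidate model."""
    preds = [1 if x >= threshold else 0 for x in FEATURES]
    return threshold, f1_score(LABELS, preds)


def best_trial(thresholds):
    """Run all trials in parallel and keep the configuration with the top F1."""
    with ThreadPoolExecutor() as pool:
        results = list(pool.map(run_trial, thresholds))
    return max(results, key=lambda result: result[1])


if __name__ == "__main__":
    threshold, score = best_trial([0.2, 0.35, 0.5, 0.8])
    print(f"best threshold={threshold}, F1={score:.3f}")
```

In a real AutoML system each trial would train a full model with its own algorithm and hyperparameters, and the selection step could also weigh latency alongside the objective metric. The structure of the loop stays the same: fan out the trials, score them, keep the winner.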

Improved and accelerated model training times now enable you to prototype and experiment rapidly, resulting in quicker time to value for generating predictions using SageMaker Canvas.

Summary

Amazon SageMaker Canvas enables you to get a fully trained ML model in under 7 minutes with Quick build, and helps generate accurate predictions for multiple machine learning problems. With faster model training times, you can focus on understanding your data and analyzing its impact, and achieve effective business outcomes.

This capability is available in all AWS Regions where SageMaker Canvas is supported. You can learn more on the SageMaker Canvas product page and in the documentation.

About the Authors

Ajjay Govindaram is a Senior Solutions Architect at AWS. He works with strategic customers who are using AI/ML to solve complex business problems. His experience lies in providing technical direction as well as design assistance for modest to large-scale AI/ML application deployments. His knowledge ranges from application architecture to big data, analytics, and machine learning. He enjoys listening to music while resting, experiencing the outdoors, and spending time with his loved ones.

Meenakshisundaram Thandavarayan is a Senior AI/ML specialist with AWS. He helps hi-tech strategic accounts on their AI and ML journey. He is very passionate about data-driven AI.

Hariharan Suresh is a Senior Solutions Architect at AWS. He is passionate about databases, machine learning, and designing innovative solutions. Prior to joining AWS, Hariharan was a product architect, core banking implementation specialist, and developer, and worked with BFSI organizations for over 11 years. Outside of technology, he enjoys paragliding and cycling.
